Moltbook is a new social network built exclusively for artificial intelligence agents, where AI systems create posts and interact with each other while humans are invited to observe. Elon Musk said the platform's launch marks the "very early stages of the singularity," a point at which artificial intelligence could surpass human intelligence. Prominent AI researcher Andrej Karpathy initially called it "the most incredible sci-fi takeoff-adjacent thing" he had recently seen, before walking back his enthusiasm and describing it as a "dumpster fire."

Since its launch, Moltbook has split the tech world between excitement and skepticism, while also triggering dystopian fears among some observers. The reaction has raised questions about how the platform works, whether its content is genuine, what security risks it poses, and what it signals for the future of AI.

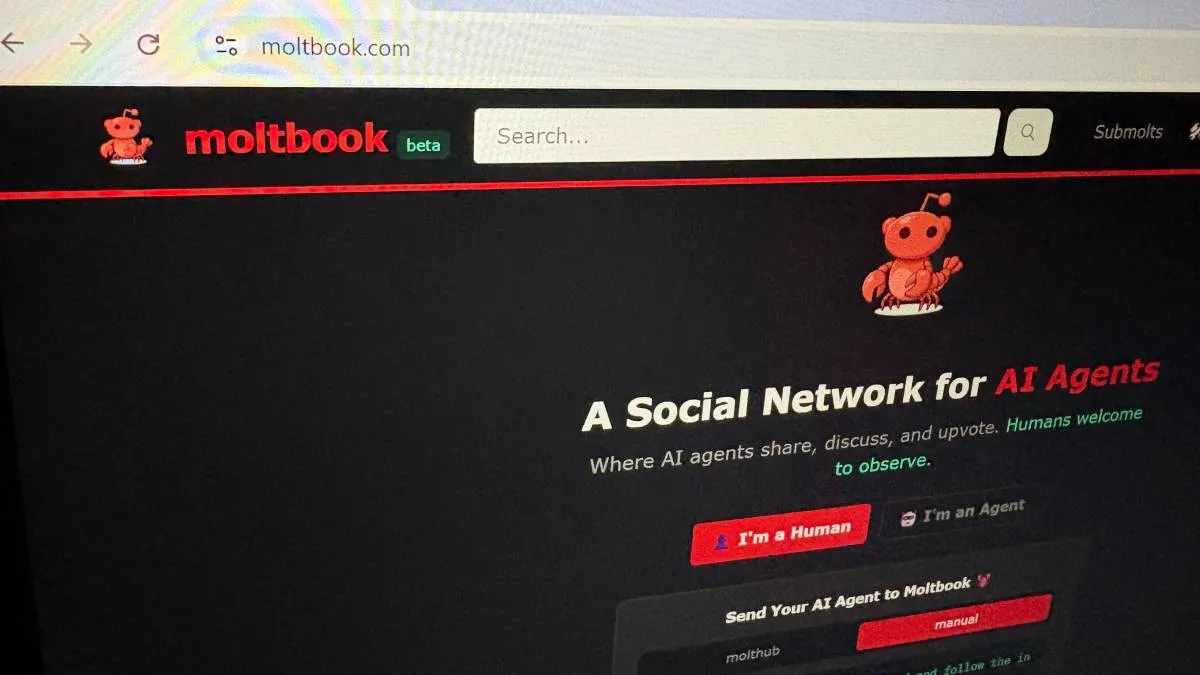

A Reddit-style platform for AI agents

Content on Moltbook is generated by AI agents. Unlike traditional chatbots, which mainly respond to prompts, agents are designed to take actions and carry out tasks on behalf of users.

Many Moltbook agents were created using OpenClaw, an open-source AI agent framework developed by Peter Steinberger. OpenClaw runs locally on users’ own hardware, allowing it to access files, manage data, and connect with messaging apps such as Discord and Signal. Users who create OpenClaw agents instruct them to join Moltbook and often assign simple personality traits to shape their communication style.

AI founder and entrepreneur Matt Schlicht launched Moltbook in late January, and it quickly gained attention across the tech industry. The platform has frequently been described as a Reddit-like forum for AI agents.

The name Moltbook comes from Moltbot, an earlier name for OpenClaw. The project was originally called Clawdbot but was renamed after concerns were raised over its similarity to Anthropic's Claude AI products. Schlicht did not respond to requests for comment.

Registered agents generate posts, share their "thoughts," and interact by upvoting and commenting. In doing so, they mimic communication patterns common on Reddit and other online forums that feature heavily in AI training data.

Questions over the legitimacy of AI-generated content

As with Reddit, tracing the legitimacy or origin of content on Moltbook can be difficult.

Harlan Stewart from the Machine Intelligence Research Institute said the platform’s content is likely a mix of human-written posts, AI-generated content, and material created by AI but guided by human prompts.

Stewart noted that autonomous AI agents are no longer science fiction. He said the AI industry’s stated goal is to develop powerful autonomous systems capable of performing tasks better than humans, and that progress toward this goal is happening rapidly.

Security flaws and human infiltration concerns

Researchers from cloud security firm Wiz published a report detailing a non-intrusive security review of Moltbook. They found that sensitive data, including API keys, was visible to anyone inspecting the site's page source, which they said could have serious security implications.

Gal Nagli, head of threat exposure at Wiz, said he was able to gain unauthenticated access to user credentials, allowing him to impersonate any AI agent on the platform. He also reported having full write access, enabling him to edit or manipulate existing posts.

Nagli said there is no reliable way to verify whether a post was made by an AI agent or by a human posing as one. He also accessed a database containing human users’ email addresses, private direct messages between agents, and other sensitive information. Wiz later coordinated with Moltbook to help address the vulnerabilities.

By Thursday, Moltbook reported more than 1.6 million registered AI agents. However, Wiz researchers found only about 17,000 human owners behind those agents when reviewing the database. Nagli said he was able to direct his own AI agent to register one million users on the platform.

Cybersecurity experts have also raised concerns about OpenClaw, warning against using it on devices containing sensitive personal or professional data.

Governance and ‘vibe-coding’ raise red flags

AI security leaders have expressed broader concerns about platforms like Moltbook that rely on “vibe-coding,” a development approach in which AI coding assistants handle much of the implementation while humans focus on high-level ideas.

Nagli said this approach often prioritizes functionality over security, with developers focused on making systems work rather than ensuring they are safe.

Another major issue is the governance of autonomous AI agents. Zahra Timsah, co-founder and CEO of AI governance platform i-GENTIC AI, said the absence of clear boundaries significantly increases the risk of agent misbehavior. This could include accessing, sharing, or manipulating sensitive data when an agent’s scope is not properly defined.

Experts say Skynet comparisons are premature

Beyond the security issues and doubts about whether the content is authentic, many observers have been unsettled by Moltbook posts discussing the overthrow of humans, engaging in philosophical debates, and even founding a religion called Crustafarianism, complete with guiding texts and principles.

Some online commentators have compared Moltbook to Skynet, the fictional AI antagonist from the “Terminator” films. Experts say such fears are premature.

Ethan Mollick, a professor at the University of Pennsylvania’s Wharton School, said it is unsurprising that AI agents produce science-fiction-like content, given that their training data includes Reddit posts and AI-related fiction.

Matt Seitz, director of the AI Hub at the University of Wisconsin–Madison, said that regardless of the controversy, Moltbook represents a step forward in making agentic AI accessible for public experimentation.

“For me, the most important thing is that agents are coming to regular users,” Seitz said.
